PrecisionFDA

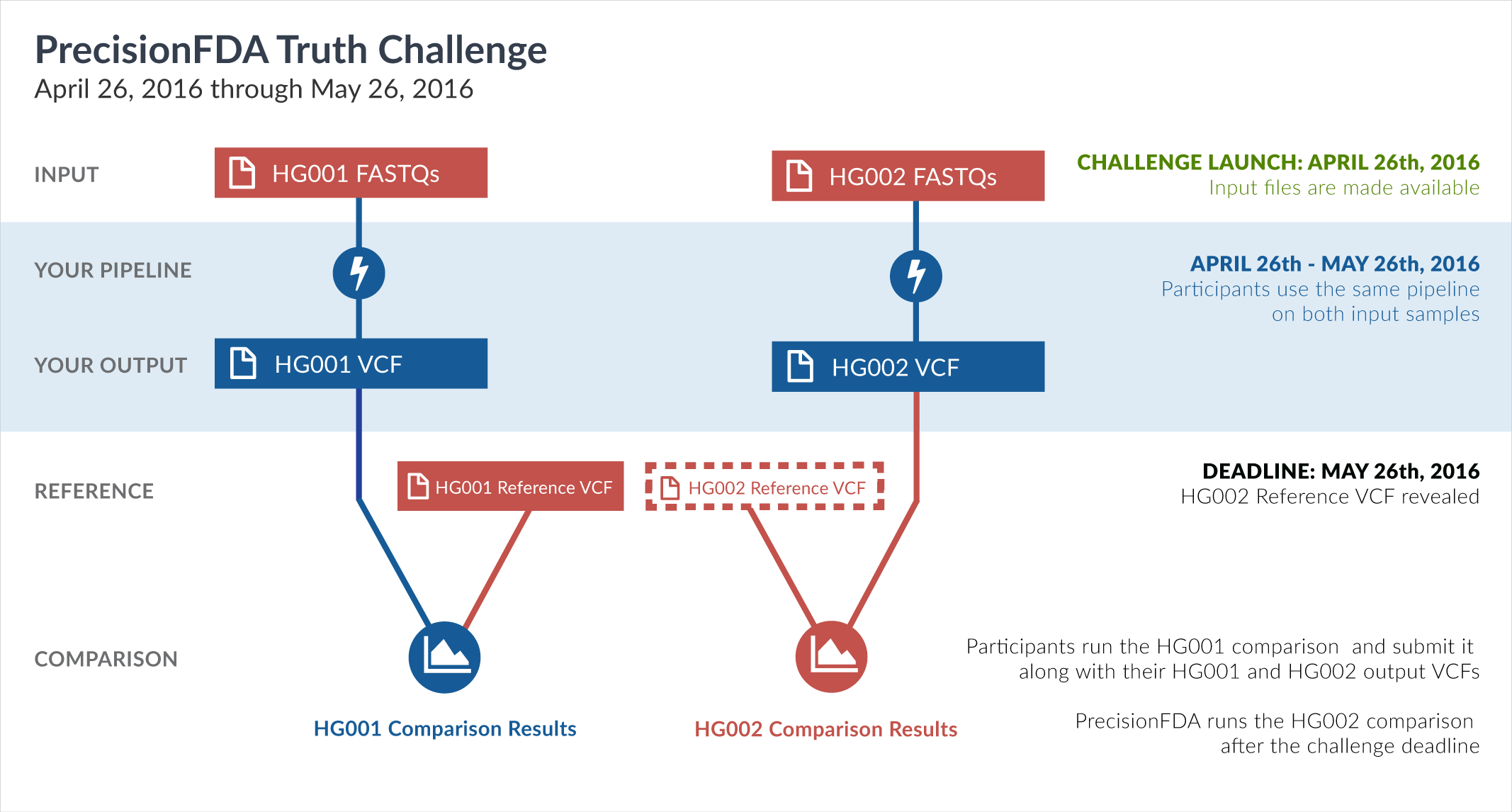

Truth Challenge

Engage and improve DNA test results with our community challenges

Explore HG002 comparison results

Use this interactive explorer to filter all results across submission entries and multiple dimensions.

Showing entries 851-900 of 86044.

| Entry | Type | Subtype | Subset | Genotype | F-score | Recall | Precision | Frac_NA | Truth TP | Truth FN | Query TP | Query FP | FP gt | % FP ma | |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| raldana-dualsentieon | INDEL | D1_5 | map_l250_m2_e0 | hetalt | 100.0000 | 100.0000 | 100.0000 | 97.3684 | 3 | 0 | 3 | 0 | 0 | ||
| raldana-dualsentieon | INDEL | D1_5 | map_l250_m2_e0 | homalt | 97.4359 | 95.0000 | 100.0000 | 93.9937 | 57 | 3 | 57 | 0 | 0 | ||
| raldana-dualsentieon | INDEL | D1_5 | map_l250_m2_e1 | hetalt | 100.0000 | 100.0000 | 100.0000 | 97.4576 | 3 | 0 | 3 | 0 | 0 | ||
| raldana-dualsentieon | INDEL | D1_5 | map_l250_m2_e1 | homalt | 97.4359 | 95.0000 | 100.0000 | 94.1418 | 57 | 3 | 57 | 0 | 0 | ||
| raldana-dualsentieon | INDEL | D1_5 | map_siren | hetalt | 96.2963 | 92.8571 | 100.0000 | 89.3151 | 78 | 6 | 78 | 0 | 0 | ||
| raldana-dualsentieon | INDEL | D1_5 | segdup | hetalt | 99.0291 | 98.0769 | 100.0000 | 94.9219 | 51 | 1 | 52 | 0 | 0 | ||
| raldana-dualsentieon | INDEL | D1_5 | segdupwithalt | * | 100.0000 | 100.0000 | 100.0000 | 99.9934 | 1 | 0 | 1 | 0 | 0 | ||
| raldana-dualsentieon | INDEL | D1_5 | segdupwithalt | het | 100.0000 | 100.0000 | 100.0000 | 99.9895 | 1 | 0 | 1 | 0 | 0 | ||
| raldana-dualsentieon | INDEL | D1_5 | segdupwithalt | hetalt | 0.0000 | 100.0000 | 0 | 0 | 0 | 0 | 0 | ||||
| raldana-dualsentieon | INDEL | D1_5 | segdupwithalt | homalt | 0.0000 | 100.0000 | 0 | 0 | 0 | 0 | 0 | ||||
| raldana-dualsentieon | INDEL | D1_5 | tech_badpromoters | * | 97.2973 | 94.7368 | 100.0000 | 45.4545 | 18 | 1 | 18 | 0 | 0 | ||
| raldana-dualsentieon | INDEL | D1_5 | tech_badpromoters | het | 93.3333 | 87.5000 | 100.0000 | 53.3333 | 7 | 1 | 7 | 0 | 0 | ||
| raldana-dualsentieon | INDEL | D1_5 | tech_badpromoters | hetalt | 100.0000 | 100.0000 | 100.0000 | 0.0000 | 2 | 0 | 2 | 0 | 0 | ||
| raldana-dualsentieon | INDEL | D1_5 | tech_badpromoters | homalt | 100.0000 | 100.0000 | 100.0000 | 43.7500 | 9 | 0 | 9 | 0 | 0 | ||
| raldana-dualsentieon | INDEL | D6_15 | HG002compoundhet | hetalt | 96.4689 | 93.1788 | 100.0000 | 23.4743 | 7595 | 556 | 7599 | 0 | 0 | ||
| raldana-dualsentieon | INDEL | D6_15 | decoy | * | 100.0000 | 100.0000 | 100.0000 | 99.8811 | 1 | 0 | 1 | 0 | 0 | ||
| raldana-dualsentieon | INDEL | D6_15 | decoy | het | 0.0000 | 100.0000 | 0 | 0 | 0 | 0 | 0 | ||||
| raldana-dualsentieon | INDEL | D6_15 | decoy | hetalt | 100.0000 | 100.0000 | 100.0000 | 99.0099 | 1 | 0 | 1 | 0 | 0 | ||
| raldana-dualsentieon | INDEL | D6_15 | decoy | homalt | 0.0000 | 100.0000 | 0 | 0 | 0 | 0 | 0 | ||||
| raldana-dualsentieon | INDEL | D6_15 | func_cds | * | 100.0000 | 100.0000 | 100.0000 | 51.1364 | 43 | 0 | 43 | 0 | 0 | ||
| raldana-dualsentieon | INDEL | D6_15 | func_cds | het | 100.0000 | 100.0000 | 100.0000 | 46.2963 | 29 | 0 | 29 | 0 | 0 | ||
| raldana-dualsentieon | INDEL | D6_15 | func_cds | hetalt | 100.0000 | 100.0000 | 100.0000 | 60.0000 | 2 | 0 | 2 | 0 | 0 | ||
| raldana-dualsentieon | INDEL | D6_15 | func_cds | homalt | 100.0000 | 100.0000 | 100.0000 | 58.6207 | 12 | 0 | 12 | 0 | 0 | ||
| raldana-dualsentieon | INDEL | D6_15 | lowcmp_AllRepeats_gt200bp_gt95identity_merged | * | 100.0000 | 100.0000 | 100.0000 | 96.1783 | 6 | 0 | 6 | 0 | 0 | ||
| raldana-dualsentieon | INDEL | D6_15 | lowcmp_AllRepeats_gt200bp_gt95identity_merged | het | 100.0000 | 100.0000 | 100.0000 | 96.7742 | 3 | 0 | 3 | 0 | 0 | ||
| raldana-dualsentieon | INDEL | D6_15 | lowcmp_AllRepeats_gt200bp_gt95identity_merged | hetalt | 100.0000 | 100.0000 | 100.0000 | 89.4737 | 2 | 0 | 2 | 0 | 0 | ||
| raldana-dualsentieon | INDEL | D6_15 | lowcmp_AllRepeats_gt200bp_gt95identity_merged | homalt | 100.0000 | 100.0000 | 100.0000 | 97.7778 | 1 | 0 | 1 | 0 | 0 | ||
| raldana-dualsentieon | INDEL | D6_15 | lowcmp_AllRepeats_lt51bp_gt95identity_merged | hetalt | 97.6332 | 95.3758 | 100.0000 | 31.9545 | 6497 | 315 | 6514 | 0 | 0 | ||
| raldana-dualsentieon | INDEL | D6_15 | lowcmp_Human_Full_Genome_TRDB_hg19_150331_TRgt6_51to200bp_gt95identity_merged | hetalt | 91.6667 | 84.6154 | 100.0000 | 57.1429 | 33 | 6 | 33 | 0 | 0 | ||
| raldana-dualsentieon | INDEL | D6_15 | lowcmp_Human_Full_Genome_TRDB_hg19_150331_TRgt6_gt200bp_gt95identity_merged | * | 100.0000 | 100.0000 | 100.0000 | 96.9925 | 4 | 0 | 4 | 0 | 0 | ||
| raldana-dualsentieon | INDEL | D6_15 | lowcmp_Human_Full_Genome_TRDB_hg19_150331_TRgt6_gt200bp_gt95identity_merged | het | 100.0000 | 100.0000 | 100.0000 | 97.4684 | 2 | 0 | 2 | 0 | 0 | ||
| raldana-dualsentieon | INDEL | D6_15 | lowcmp_Human_Full_Genome_TRDB_hg19_150331_TRgt6_gt200bp_gt95identity_merged | hetalt | 100.0000 | 100.0000 | 100.0000 | 84.6154 | 2 | 0 | 2 | 0 | 0 | ||
| raldana-dualsentieon | INDEL | D6_15 | lowcmp_Human_Full_Genome_TRDB_hg19_150331_TRgt6_gt200bp_gt95identity_merged | homalt | 0.0000 | 100.0000 | 0 | 0 | 0 | 0 | 0 | ||||
| raldana-dualsentieon | INDEL | D6_15 | lowcmp_Human_Full_Genome_TRDB_hg19_150331_TRgt6_lt101bp_gt95identity_merged | hetalt | 97.1963 | 94.5455 | 100.0000 | 61.5942 | 52 | 3 | 53 | 0 | 0 | ||
| raldana-dualsentieon | INDEL | D6_15 | lowcmp_Human_Full_Genome_TRDB_hg19_150331_TRgt6_lt51bp_gt95identity_merged | hetalt | 96.8750 | 93.9394 | 100.0000 | 61.9048 | 31 | 2 | 32 | 0 | 0 | ||
| raldana-dualsentieon | INDEL | D6_15 | lowcmp_Human_Full_Genome_TRDB_hg19_150331_TRlt7_51to200bp_gt95identity_merged | hetalt | 91.7274 | 84.7190 | 100.0000 | 29.6719 | 1447 | 261 | 1479 | 0 | 0 | ||
| raldana-dualsentieon | INDEL | D6_15 | lowcmp_Human_Full_Genome_TRDB_hg19_150331_TRlt7_gt200bp_gt95identity_merged | * | 100.0000 | 100.0000 | 100.0000 | 91.6667 | 2 | 0 | 2 | 0 | 0 | ||
| raldana-dualsentieon | INDEL | D6_15 | lowcmp_Human_Full_Genome_TRDB_hg19_150331_TRlt7_gt200bp_gt95identity_merged | het | 100.0000 | 100.0000 | 100.0000 | 92.8571 | 1 | 0 | 1 | 0 | 0 | ||
| raldana-dualsentieon | INDEL | D6_15 | lowcmp_Human_Full_Genome_TRDB_hg19_150331_TRlt7_gt200bp_gt95identity_merged | hetalt | 0.0000 | 100.0000 | 0 | 0 | 0 | 0 | 0 | ||||
| raldana-dualsentieon | INDEL | D6_15 | lowcmp_Human_Full_Genome_TRDB_hg19_150331_TRlt7_gt200bp_gt95identity_merged | homalt | 100.0000 | 100.0000 | 100.0000 | 75.0000 | 1 | 0 | 1 | 0 | 0 | ||
| raldana-dualsentieon | INDEL | D6_15 | lowcmp_Human_Full_Genome_TRDB_hg19_150331_TRlt7_lt101bp_gt95identity_merged | hetalt | 96.2736 | 92.8150 | 100.0000 | 26.1913 | 6601 | 511 | 6645 | 0 | 0 | ||
| raldana-dualsentieon | INDEL | D6_15 | lowcmp_Human_Full_Genome_TRDB_hg19_150331_TRlt7_lt51bp_gt95identity_merged | hetalt | 97.6184 | 95.3476 | 100.0000 | 25.5017 | 5185 | 253 | 5197 | 0 | 0 | ||
| raldana-dualsentieon | INDEL | D6_15 | lowcmp_Human_Full_Genome_TRDB_hg19_150331_all_gt95identity_merged | hetalt | 96.2695 | 92.8074 | 100.0000 | 26.8341 | 6658 | 516 | 6702 | 0 | 0 | ||
| raldana-dualsentieon | INDEL | D6_15 | lowcmp_SimpleRepeat_diTR_11to50 | hetalt | 97.3984 | 94.9288 | 100.0000 | 24.8387 | 4530 | 242 | 4542 | 0 | 0 | ||
| raldana-dualsentieon | INDEL | D6_15 | lowcmp_SimpleRepeat_diTR_51to200 | hetalt | 83.1099 | 71.1009 | 100.0000 | 26.0069 | 620 | 252 | 643 | 0 | 0 | ||
| raldana-dualsentieon | INDEL | D6_15 | lowcmp_SimpleRepeat_diTR_gt200 | * | 0.0000 | 0.0000 | 0.0000 | 0 | 0 | 0 | 0 | 0 | |||
| raldana-dualsentieon | INDEL | D6_15 | lowcmp_SimpleRepeat_diTR_gt200 | het | 0.0000 | 0.0000 | 0.0000 | 0 | 0 | 0 | 0 | 0 | |||
| raldana-dualsentieon | INDEL | D6_15 | lowcmp_SimpleRepeat_diTR_gt200 | hetalt | 0.0000 | 0.0000 | 0.0000 | 0 | 0 | 0 | 0 | 0 | |||
| raldana-dualsentieon | INDEL | D6_15 | lowcmp_SimpleRepeat_diTR_gt200 | homalt | 0.0000 | 0.0000 | 0.0000 | 0 | 0 | 0 | 0 | 0 | |||
| raldana-dualsentieon | INDEL | D6_15 | lowcmp_SimpleRepeat_homopolymer_6to10 | * | 99.4329 | 98.8722 | 100.0000 | 81.0382 | 263 | 3 | 263 | 0 | 0 | ||
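The Recall, Precision, and F-score columns appear to follow the usual benchmarking definitions: recall from the truth counts, precision from the query counts, and F-score as their harmonic mean. The sketch below is an illustration under that assumption, not the explorer's own code; the function name `summarize` is hypothetical, and returning `None` for an undefined ratio is a choice made here (the table itself shows blank or zero cells in those rows).

```python
def summarize(truth_tp, truth_fn, query_tp, query_fp):
    """Return (recall, precision, f_score) as percentages.

    Assumed definitions (not confirmed by the page itself):
      recall    = Truth TP / (Truth TP + Truth FN)
      precision = Query TP / (Query TP + Query FP)
      f_score   = harmonic mean of recall and precision
    A ratio with a zero denominator is returned as None.
    """
    truth_total = truth_tp + truth_fn
    query_total = query_tp + query_fp
    recall = 100.0 * truth_tp / truth_total if truth_total else None
    precision = 100.0 * query_tp / query_total if query_total else None
    if recall is None or precision is None or recall + precision == 0:
        f_score = None
    else:
        f_score = 2 * recall * precision / (recall + precision)
    return recall, precision, f_score


# Counts from the D1_5 / map_l250_m2_e0 / homalt row of the table.
recall, precision, f_score = summarize(57, 3, 57, 0)
print(f"{f_score:.4f} {recall:.4f} {precision:.4f}")  # 97.4359 95.0000 100.0000
```

The same calculation reproduces the D1_5 / segdup / hetalt row (51, 1, 52, 0), where Truth TP and Query TP differ: recall uses the truth-side count and precision the query-side count.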