PrecisionFDA

Truth Challenge

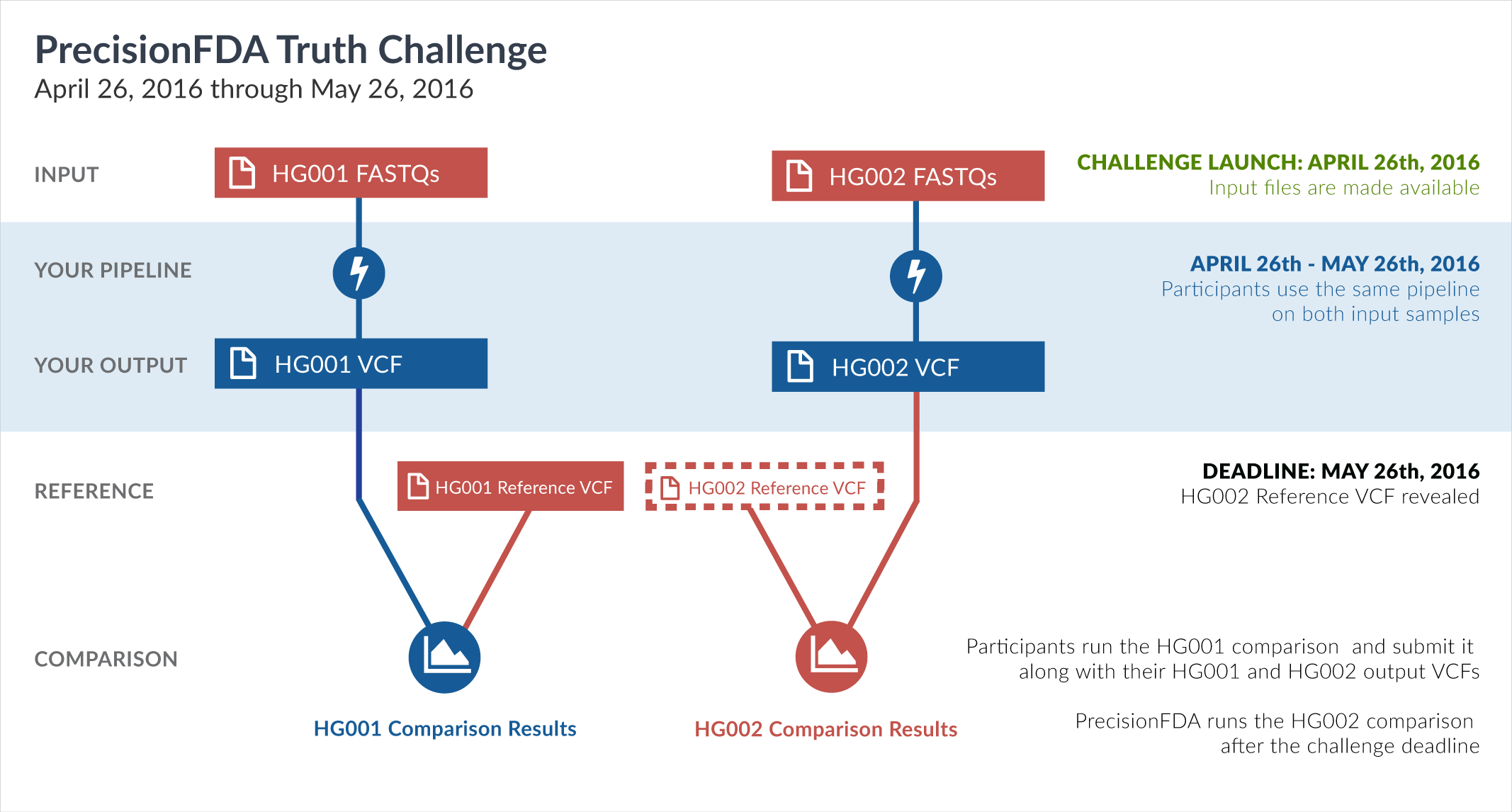

Engage and improve DNA test results with our community challenges

Explore HG002 comparison results

Use this interactive explorer to filter all results across submission entries and multiple dimensions.

Showing rows 33451-33500 of 86044.

| Entry | Type | Subtype | Subset | Genotype | F-score | Recall | Precision | Frac_NA | Truth TP | Truth FN | Query TP | Query FP | FP gt | % FP ma |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| raldana-dualsentieon | INDEL | D16_PLUS | lowcmp_SimpleRepeat_triTR_11to50 | het | 98.3329 | 98.3607 | 98.3051 | 75.0000 | 60 | 1 | 58 | 1 | 1 | 100.0000 |
| raldana-dualsentieon | INDEL | D16_PLUS | lowcmp_SimpleRepeat_triTR_51to200 | het | 78.9474 | 75.0000 | 83.3333 | 88.4615 | 6 | 2 | 5 | 1 | 1 | 100.0000 |
| raldana-dualsentieon | INDEL | D16_PLUS | lowcmp_SimpleRepeat_triTR_51to200 | homalt | 96.2963 | 100.0000 | 92.8571 | 41.6667 | 13 | 0 | 13 | 1 | 1 | 100.0000 |
| raldana-dualsentieon | INDEL | D1_5 | * | hetalt | 96.8333 | 93.8702 | 99.9896 | 62.1760 | 9617 | 628 | 9660 | 1 | 1 | 100.0000 |
| raldana-dualsentieon | INDEL | D1_5 | HG002complexvar | hetalt | 97.5400 | 95.2663 | 99.9249 | 72.3364 | 1288 | 64 | 1331 | 1 | 1 | 100.0000 |
| raldana-dualsentieon | INDEL | D1_5 | HG002compoundhet | hetalt | 96.8240 | 93.8528 | 99.9896 | 57.7055 | 9588 | 628 | 9588 | 1 | 1 | 100.0000 |
| raldana-dualsentieon | INDEL | D1_5 | lowcmp_Human_Full_Genome_TRDB_hg19_150331_TRgt6_lt101bp_gt95identity_merged | het | 99.2916 | 98.8069 | 99.7809 | 75.8402 | 911 | 11 | 911 | 2 | 1 | 50.0000 |
| raldana-dualsentieon | INDEL | D1_5 | lowcmp_Human_Full_Genome_TRDB_hg19_150331_TRgt6_lt51bp_gt95identity_merged | * | 99.6226 | 99.3415 | 99.9054 | 80.1278 | 1056 | 7 | 1056 | 1 | 1 | 100.0000 |
| raldana-dualsentieon | INDEL | D1_5 | lowcmp_Human_Full_Genome_TRDB_hg19_150331_TRgt6_lt51bp_gt95identity_merged | homalt | 99.8647 | 100.0000 | 99.7297 | 81.5645 | 369 | 0 | 369 | 1 | 1 | 100.0000 |
| raldana-dualsentieon | INDEL | D1_5 | map_l100_m0_e0 | het | 98.0506 | 97.8003 | 98.3022 | 82.9226 | 578 | 13 | 579 | 10 | 1 | 10.0000 |
| raldana-dualsentieon | INDEL | D1_5 | map_l150_m0_e0 | * | 97.4141 | 97.5779 | 97.2509 | 89.7535 | 282 | 7 | 283 | 8 | 1 | 12.5000 |
| raldana-dualsentieon | INDEL | D1_5 | map_l150_m0_e0 | homalt | 97.6190 | 96.4706 | 98.7952 | 89.0933 | 82 | 3 | 82 | 1 | 1 | 100.0000 |
| raldana-dualsentieon | INDEL | D1_5 | map_l150_m1_e0 | homalt | 98.4479 | 97.3684 | 99.5516 | 86.1491 | 222 | 6 | 222 | 1 | 1 | 100.0000 |
| raldana-dualsentieon | INDEL | D1_5 | map_l150_m2_e0 | homalt | 98.5386 | 97.5207 | 99.5781 | 86.9780 | 236 | 6 | 236 | 1 | 1 | 100.0000 |
| raldana-dualsentieon | INDEL | D1_5 | map_l250_m1_e0 | * | 96.1877 | 95.9064 | 96.4706 | 94.3428 | 164 | 7 | 164 | 6 | 1 | 16.6667 |
| raldana-dualsentieon | INDEL | D1_5 | map_l250_m1_e0 | het | 95.5357 | 96.3964 | 94.6903 | 94.5725 | 107 | 4 | 107 | 6 | 1 | 16.6667 |
| raldana-dualsentieon | INDEL | D1_5 | map_l250_m2_e0 | * | 96.4578 | 96.1957 | 96.7213 | 94.6460 | 177 | 7 | 177 | 6 | 1 | 16.6667 |
| raldana-dualsentieon | INDEL | D1_5 | map_l250_m2_e0 | het | 95.9016 | 96.6942 | 95.1220 | 94.7771 | 117 | 4 | 117 | 6 | 1 | 16.6667 |
| raldana-dualsentieon | INDEL | D1_5 | map_l250_m2_e1 | * | 96.4770 | 96.2162 | 96.7391 | 94.7489 | 178 | 7 | 178 | 6 | 1 | 16.6667 |
| raldana-dualsentieon | INDEL | D1_5 | map_l250_m2_e1 | het | 95.9350 | 96.7213 | 95.1613 | 94.8612 | 118 | 4 | 118 | 6 | 1 | 16.6667 |
| raldana-dualsentieon | INDEL | D1_5 | map_siren | het | 99.0760 | 98.8142 | 99.3392 | 78.2192 | 2250 | 27 | 2255 | 15 | 1 | 6.6667 |
| raldana-dualsentieon | INDEL | D6_15 | * | hetalt | 96.4666 | 93.1857 | 99.9870 | 32.1144 | 7617 | 557 | 7666 | 1 | 1 | 100.0000 |
| raldana-dualsentieon | INDEL | D6_15 | HG002complexvar | hetalt | 97.2668 | 94.7680 | 99.9010 | 47.1204 | 960 | 53 | 1009 | 1 | 1 | 100.0000 |
| raldana-dualsentieon | INDEL | D6_15 | lowcmp_AllRepeats_51to200bp_gt95identity_merged | hetalt | 92.1644 | 85.5030 | 99.9515 | 30.1491 | 2023 | 343 | 2061 | 1 | 1 | 100.0000 |
| raldana-dualsentieon | INDEL | D6_15 | lowcmp_Human_Full_Genome_TRDB_hg19_150331 | hetalt | 96.4144 | 93.0885 | 99.9868 | 28.9050 | 7502 | 557 | 7550 | 1 | 1 | 100.0000 |
| raldana-dualsentieon | INDEL | D6_15 | lowcmp_Human_Full_Genome_TRDB_hg19_150331_TRgt6_51to200bp_gt95identity_merged | homalt | 98.8506 | 100.0000 | 97.7273 | 76.5957 | 43 | 0 | 43 | 1 | 1 | 100.0000 |
| raldana-dualsentieon | INDEL | D6_15 | lowcmp_Human_Full_Genome_TRDB_hg19_150331_TRgt6_lt101bp_gt95identity_merged | homalt | 99.7333 | 100.0000 | 99.4681 | 59.3514 | 374 | 0 | 374 | 2 | 1 | 50.0000 |
| raldana-dualsentieon | INDEL | D6_15 | lowcmp_Human_Full_Genome_TRDB_hg19_150331_TRgt6_lt51bp_gt95identity_merged | het | 99.4851 | 99.1786 | 99.7934 | 64.4901 | 483 | 4 | 483 | 1 | 1 | 100.0000 |
| raldana-dualsentieon | INDEL | D6_15 | lowcmp_Human_Full_Genome_TRDB_hg19_150331_TRgt6_lt51bp_gt95identity_merged | homalt | 99.7093 | 100.0000 | 99.4203 | 57.3020 | 343 | 0 | 343 | 2 | 1 | 50.0000 |
| raldana-dualsentieon | INDEL | D6_15 | lowcmp_Human_Full_Genome_TRDB_hg19_150331_all_merged | hetalt | 96.4144 | 93.0885 | 99.9868 | 28.9050 | 7502 | 557 | 7550 | 1 | 1 | 100.0000 |
| raldana-dualsentieon | INDEL | D6_15 | lowcmp_SimpleRepeat_quadTR_51to200 | hetalt | 96.7499 | 93.8209 | 99.8677 | 23.9437 | 744 | 49 | 755 | 1 | 1 | 100.0000 |
| raldana-dualsentieon | INDEL | D6_15 | lowcmp_SimpleRepeat_triTR_11to50 | homalt | 99.8873 | 100.0000 | 99.7748 | 33.9286 | 443 | 0 | 443 | 1 | 1 | 100.0000 |
| raldana-dualsentieon | INDEL | D6_15 | lowcmp_SimpleRepeat_triTR_51to200 | * | 97.8571 | 96.4789 | 99.2754 | 41.7722 | 137 | 5 | 137 | 1 | 1 | 100.0000 |
| raldana-dualsentieon | INDEL | D6_15 | lowcmp_SimpleRepeat_triTR_51to200 | het | 95.8333 | 95.8333 | 95.8333 | 71.7647 | 23 | 1 | 23 | 1 | 1 | 100.0000 |
| raldana-dualsentieon | INDEL | D6_15 | map_l100_m1_e0 | het | 96.4143 | 96.0317 | 96.8000 | 86.3983 | 121 | 5 | 121 | 4 | 1 | 25.0000 |
| raldana-dualsentieon | INDEL | D6_15 | map_l100_m1_e0 | homalt | 98.4375 | 98.4375 | 98.4375 | 84.1975 | 63 | 1 | 63 | 1 | 1 | 100.0000 |
| raldana-dualsentieon | INDEL | D6_15 | map_l100_m2_e0 | het | 95.7529 | 94.6565 | 96.8750 | 86.7632 | 124 | 7 | 124 | 4 | 1 | 25.0000 |
| raldana-dualsentieon | INDEL | D6_15 | map_l100_m2_e0 | homalt | 98.4615 | 98.4615 | 98.4615 | 84.9188 | 64 | 1 | 64 | 1 | 1 | 100.0000 |
| raldana-dualsentieon | INDEL | D6_15 | map_l100_m2_e1 | het | 95.8801 | 94.8148 | 96.9697 | 86.5990 | 128 | 7 | 128 | 4 | 1 | 25.0000 |
| raldana-dualsentieon | INDEL | D6_15 | map_l100_m2_e1 | homalt | 98.5075 | 98.5075 | 98.5075 | 84.8073 | 66 | 1 | 66 | 1 | 1 | 100.0000 |
| raldana-dualsentieon | INDEL | D6_15 | map_l125_m1_e0 | * | 97.3913 | 95.7265 | 99.1150 | 87.6096 | 112 | 5 | 112 | 1 | 1 | 100.0000 |
| raldana-dualsentieon | INDEL | D6_15 | map_l125_m1_e0 | het | 96.8254 | 95.3125 | 98.3871 | 89.1986 | 61 | 3 | 61 | 1 | 1 | 100.0000 |
| raldana-dualsentieon | INDEL | D6_15 | map_l125_m2_e0 | * | 96.7480 | 94.4444 | 99.1667 | 87.9154 | 119 | 7 | 119 | 1 | 1 | 100.0000 |
| raldana-dualsentieon | INDEL | D6_15 | map_l125_m2_e0 | het | 95.6522 | 92.9577 | 98.5075 | 89.2456 | 66 | 5 | 66 | 1 | 1 | 100.0000 |
| raldana-dualsentieon | INDEL | D6_15 | map_l125_m2_e1 | * | 96.3855 | 93.7500 | 99.1736 | 88.0788 | 120 | 8 | 120 | 1 | 1 | 100.0000 |
| raldana-dualsentieon | INDEL | D6_15 | map_l125_m2_e1 | het | 95.6522 | 92.9577 | 98.5075 | 89.4155 | 66 | 5 | 66 | 1 | 1 | 100.0000 |
| raldana-dualsentieon | INDEL | D6_15 | map_siren | het | 97.4729 | 96.4286 | 98.5401 | 83.9390 | 270 | 10 | 270 | 4 | 1 | 25.0000 |
| raldana-dualsentieon | INDEL | D6_15 | map_siren | homalt | 98.8506 | 99.2308 | 98.4733 | 81.7803 | 129 | 1 | 129 | 2 | 1 | 50.0000 |
| raldana-dualsentieon | INDEL | I16_PLUS | * | hetalt | 94.2306 | 89.1325 | 99.9472 | 55.4588 | 1870 | 228 | 1892 | 1 | 1 | 100.0000 |
| raldana-dualsentieon | INDEL | I16_PLUS | HG002complexvar | het | 98.7816 | 97.7444 | 99.8410 | 62.2675 | 650 | 15 | 628 | 1 | 1 | 100.0000 |
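
The percentage columns in the rows above appear to follow from the count columns. The sketch below recomputes them using the standard definitions of recall, precision, and F-score. It is an assumption that the explorer computes its values this way, and reading `% FP ma` as `FP gt` divided by `Query FP` is inferred only from the displayed rows. The function name `recompute_metrics` and its parameter names are hypothetical.

```python
def recompute_metrics(truth_tp, truth_fn, query_tp, query_fp, fp_gt):
    """Recompute the percentage columns from raw counts.

    Assumed definitions (not confirmed by the page itself):
      Recall    = Truth TP / (Truth TP + Truth FN)
      Precision = Query TP / (Query TP + Query FP)
      F-score   = harmonic mean of precision and recall
      % FP ma   = FP gt / Query FP
    All results are percentages rounded to four decimals.
    """
    recall = 100.0 * truth_tp / (truth_tp + truth_fn)
    precision = 100.0 * query_tp / (query_tp + query_fp)
    f_score = 2.0 * precision * recall / (precision + recall)
    # Guard against division by zero when a row has no false positives.
    pct_fp_gt = 100.0 * fp_gt / query_fp if query_fp else 0.0
    return {
        "f_score": round(f_score, 4),
        "recall": round(recall, 4),
        "precision": round(precision, 4),
        "pct_fp_gt": round(pct_fp_gt, 4),
    }


# First row of the table: D16_PLUS, lowcmp_SimpleRepeat_triTR_11to50, het.
first_row = recompute_metrics(truth_tp=60, truth_fn=1, query_tp=58, query_fp=1, fp_gt=1)
print(first_row)
```

For the first row this yields a recall of 98.3607, a precision of 98.3051, and an F-score of 98.3329, which matches the displayed values. Note that recall uses the truth-side counts while precision uses the query-side counts, which is why `Truth TP` and `Query TP` can differ within a row.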