PrecisionFDA

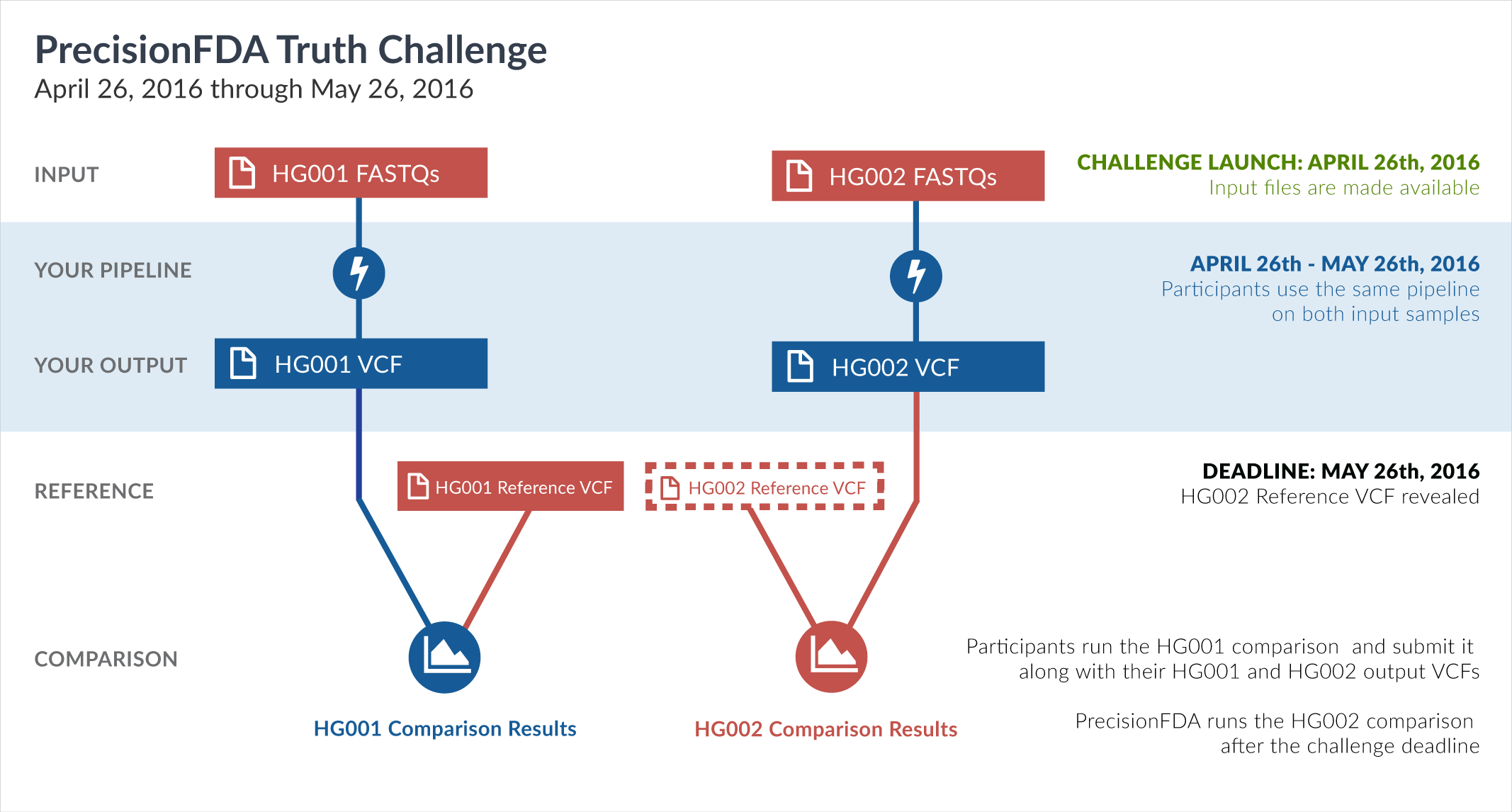

Truth Challenge

Engage and improve DNA test results with our community challenges

Explore HG002 comparison results

Use this interactive explorer to filter all results across submission entries and multiple dimensions.

Showing entries 52151-52200 of 86044.

| Entry | Type | Subtype | Subset | Genotype | F-score | Recall | Precision | Frac_NA | Truth TP | Truth FN | Query TP | Query FP | FP gt | % FP ma | |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| qzeng-custom | SNP | * | lowcmp_SimpleRepeat_triTR_51to200 | * | 70.5882 | 66.6667 | 75.0000 | 98.1043 | 6 | 3 | 6 | 2 | 1 | 50.0000 | |

| qzeng-custom | SNP | * | lowcmp_SimpleRepeat_triTR_51to200 | homalt | | 0.0000 | 0.0000 | 96.9697 | 0 | 2 | 0 | 2 | 1 | 50.0000 | |

| qzeng-custom | SNP | * | tech_badpromoters | * | 96.8273 | 98.0892 | 95.5975 | 47.8689 | 154 | 3 | 152 | 7 | 1 | 14.2857 | |

| qzeng-custom | SNP | * | tech_badpromoters | homalt | 98.0970 | 97.5000 | 98.7013 | 46.1538 | 78 | 2 | 76 | 1 | 1 | 100.0000 | |

| qzeng-custom | SNP | ti | func_cds | het | 99.7296 | 99.8589 | 99.6007 | 30.7386 | 8492 | 12 | 8481 | 34 | 1 | 2.9412 | |

| qzeng-custom | SNP | ti | lowcmp_AllRepeats_lt51bp_gt95identity_merged | hetalt | 83.3333 | 83.3333 | 83.3333 | 85.7143 | 5 | 1 | 5 | 1 | 1 | 100.0000 | |

| qzeng-custom | SNP | ti | lowcmp_Human_Full_Genome_TRDB_hg19_150331_TRgt6_lt51bp_gt95identity_merged | het | 99.2117 | 99.6203 | 98.8065 | 58.3355 | 1574 | 6 | 1573 | 19 | 1 | 5.2632 | |

| qzeng-custom | SNP | ti | lowcmp_SimpleRepeat_homopolymer_6to10 | het | 99.6434 | 99.5326 | 99.7544 | 48.4618 | 4046 | 19 | 4061 | 10 | 1 | 10.0000 | |

| qzeng-custom | SNP | ti | lowcmp_SimpleRepeat_homopolymer_6to10 | homalt | 99.7726 | 99.6365 | 99.9090 | 41.7859 | 2193 | 8 | 2195 | 2 | 1 | 50.0000 | |

| qzeng-custom | SNP | ti | lowcmp_SimpleRepeat_quadTR_11to50 | hetalt | 66.6667 | 100.0000 | 50.0000 | 80.0000 | 1 | 0 | 1 | 1 | 1 | 100.0000 | |

| qzeng-custom | SNP | ti | lowcmp_SimpleRepeat_triTR_11to50 | het | 99.4125 | 98.9911 | 99.8375 | 43.7900 | 2453 | 25 | 2458 | 4 | 1 | 25.0000 | |

| qzeng-custom | SNP | ti | lowcmp_SimpleRepeat_triTR_51to200 | * | 66.6667 | 62.5000 | 71.4286 | 97.8261 | 5 | 3 | 5 | 2 | 1 | 50.0000 | |

| qzeng-custom | SNP | ti | lowcmp_SimpleRepeat_triTR_51to200 | homalt | | 0.0000 | 0.0000 | 95.9184 | 0 | 2 | 0 | 2 | 1 | 50.0000 | |

| qzeng-custom | SNP | ti | map_l250_m0_e0 | homalt | 67.7742 | 51.3761 | 99.5475 | 94.9738 | 224 | 212 | 220 | 1 | 1 | 100.0000 | |

| qzeng-custom | SNP | tv | HG002complexvar | hetalt | 97.3511 | 95.1613 | 99.6441 | 38.9130 | 295 | 15 | 280 | 1 | 1 | 100.0000 | |

| qzeng-custom | SNP | tv | HG002compoundhet | hetalt | 98.5292 | 97.2158 | 99.8786 | 21.9697 | 838 | 24 | 823 | 1 | 1 | 100.0000 | |

| qzeng-custom | SNP | tv | lowcmp_AllRepeats_lt51bp_gt95identity_merged | hetalt | 92.3077 | 92.3077 | 92.3077 | 82.1918 | 12 | 1 | 12 | 1 | 1 | 100.0000 | |

| qzeng-custom | SNP | tv | lowcmp_Human_Full_Genome_TRDB_hg19_150331_TRgt6_51to200bp_gt95identity_merged | homalt | 99.6872 | 99.5842 | 99.7904 | 53.5992 | 479 | 2 | 476 | 1 | 1 | 100.0000 | |

| qzeng-custom | SNP | tv | lowcmp_Human_Full_Genome_TRDB_hg19_150331_TRgt6_lt101bp_gt95identity_merged | * | 98.9647 | 99.8160 | 98.1279 | 69.9341 | 2170 | 4 | 2149 | 41 | 1 | 2.4390 | |

| qzeng-custom | SNP | tv | lowcmp_Human_Full_Genome_TRDB_hg19_150331_TRgt6_lt101bp_gt95identity_merged | het | 98.3855 | 99.7116 | 97.0943 | 71.3328 | 1383 | 4 | 1370 | 41 | 1 | 2.4390 | |

| qzeng-custom | SNP | tv | lowcmp_SimpleRepeat_diTR_51to200 | * | 80.0000 | 76.9231 | 83.3333 | 97.2758 | 20 | 6 | 20 | 4 | 1 | 25.0000 | |

| qzeng-custom | SNP | tv | lowcmp_SimpleRepeat_diTR_51to200 | het | 72.7273 | 70.5882 | 75.0000 | 97.7654 | 12 | 5 | 12 | 4 | 1 | 25.0000 | |

| qzeng-custom | SNP | tv | lowcmp_SimpleRepeat_quadTR_11to50 | hetalt | 90.9091 | 100.0000 | 83.3333 | 68.4211 | 5 | 0 | 5 | 1 | 1 | 100.0000 | |

| qzeng-custom | SNP | tv | lowcmp_SimpleRepeat_quadTR_51to200 | homalt | 90.9091 | 100.0000 | 83.3333 | 94.2857 | 6 | 0 | 5 | 1 | 1 | 100.0000 | |

| qzeng-custom | SNP | tv | lowcmp_SimpleRepeat_triTR_11to50 | het | 99.1334 | 99.1581 | 99.1088 | 47.3320 | 2120 | 18 | 2113 | 19 | 1 | 5.2632 | |

| qzeng-custom | SNP | tv | tech_badpromoters | * | 93.8212 | 95.8333 | 91.8919 | 51.6340 | 69 | 3 | 68 | 6 | 1 | 16.6667 | |

| qzeng-custom | SNP | tv | tech_badpromoters | homalt | 96.0692 | 94.8718 | 97.2973 | 51.3158 | 37 | 2 | 36 | 1 | 1 | 100.0000 | |

| raldana-dualsentieon | INDEL | * | lowcmp_AllRepeats_lt51bp_gt95identity_merged | hetalt | 96.8972 | 93.9872 | 99.9932 | 58.6098 | 14490 | 927 | 14606 | 1 | 1 | 100.0000 | |

| raldana-dualsentieon | INDEL | * | lowcmp_Human_Full_Genome_TRDB_hg19_150331_TRgt6_lt51bp_gt95identity_merged | het | 99.5541 | 99.2049 | 99.9057 | 74.5256 | 2121 | 17 | 2119 | 2 | 1 | 50.0000 | |

| raldana-dualsentieon | INDEL | * | lowcmp_Human_Full_Genome_TRDB_hg19_150331_TRlt7_lt51bp_gt95identity_merged | hetalt | 96.8228 | 93.8496 | 99.9905 | 28.4361 | 10422 | 683 | 10501 | 1 | 1 | 100.0000 | |

| raldana-dualsentieon | INDEL | * | lowcmp_SimpleRepeat_diTR_11to50 | hetalt | 96.7068 | 93.6325 | 99.9899 | 31.2313 | 9808 | 667 | 9890 | 1 | 1 | 100.0000 | |

| raldana-dualsentieon | INDEL | * | lowcmp_SimpleRepeat_quadTR_51to200 | hetalt | 96.6754 | 93.6402 | 99.9140 | 32.5015 | 1119 | 76 | 1162 | 1 | 1 | 100.0000 | |

| raldana-dualsentieon | INDEL | * | map_l100_m0_e0 | het | 97.3501 | 97.0617 | 97.6401 | 84.2716 | 991 | 30 | 993 | 24 | 1 | 4.1667 | |

| raldana-dualsentieon | INDEL | * | map_l250_m1_e0 | het | 93.2292 | 94.2105 | 92.2680 | 95.0218 | 179 | 11 | 179 | 15 | 1 | 6.6667 | |

| raldana-dualsentieon | INDEL | * | map_l250_m1_e0 | homalt | 96.7136 | 94.4954 | 99.0385 | 93.9850 | 103 | 6 | 103 | 1 | 1 | 100.0000 | |

| raldana-dualsentieon | INDEL | * | map_l250_m2_e0 | het | 93.8679 | 94.7619 | 92.9907 | 95.2339 | 199 | 11 | 199 | 15 | 1 | 6.6667 | |

| raldana-dualsentieon | INDEL | * | map_l250_m2_e0 | homalt | 96.8889 | 94.7826 | 99.0909 | 94.5893 | 109 | 6 | 109 | 1 | 1 | 100.0000 | |

| raldana-dualsentieon | INDEL | * | map_l250_m2_e1 | het | 93.8967 | 94.7867 | 93.0233 | 95.3524 | 200 | 11 | 200 | 15 | 1 | 6.6667 | |

| raldana-dualsentieon | INDEL | * | map_l250_m2_e1 | homalt | 96.9163 | 94.8276 | 99.0991 | 94.6839 | 110 | 6 | 110 | 1 | 1 | 100.0000 | |

| ndellapenna-hhga | INDEL | * | lowcmp_Human_Full_Genome_TRDB_hg19_150331_TRgt6_gt200bp_gt95identity_merged | * | 87.6827 | 82.3529 | 93.7500 | 99.9627 | 14 | 3 | 15 | 1 | 1 | 100.0000 | |

| ndellapenna-hhga | INDEL | * | lowcmp_Human_Full_Genome_TRDB_hg19_150331_TRgt6_gt200bp_gt95identity_merged | het | 96.0000 | 100.0000 | 92.3077 | 99.3970 | 10 | 0 | 12 | 1 | 1 | 100.0000 | |

| ndellapenna-hhga | INDEL | * | lowcmp_Human_Full_Genome_TRDB_hg19_150331_TRlt7_gt200bp_gt95identity_merged | * | 66.6667 | 66.6667 | 66.6667 | 99.5739 | 2 | 1 | 2 | 1 | 1 | 100.0000 | |

| ndellapenna-hhga | INDEL | * | lowcmp_Human_Full_Genome_TRDB_hg19_150331_TRlt7_gt200bp_gt95identity_merged | het | 80.0000 | 100.0000 | 66.6667 | 96.9697 | 2 | 0 | 2 | 1 | 1 | 100.0000 | |

| ndellapenna-hhga | INDEL | * | map_l250_m0_e0 | * | 93.7500 | 96.1538 | 91.4634 | 99.7895 | 75 | 3 | 75 | 7 | 1 | 14.2857 | |

| ndellapenna-hhga | INDEL | * | map_l250_m0_e0 | het | 91.8919 | 96.2264 | 87.9310 | 97.3827 | 51 | 2 | 51 | 7 | 1 | 14.2857 | |

| ndellapenna-hhga | INDEL | * | map_l250_m1_e0 | homalt | 97.6959 | 97.2477 | 98.1481 | 94.5066 | 106 | 3 | 106 | 2 | 1 | 50.0000 | |

| ndellapenna-hhga | INDEL | * | map_l250_m2_e0 | homalt | 97.8166 | 97.3913 | 98.2456 | 95.1136 | 112 | 3 | 112 | 2 | 1 | 50.0000 | |

| ndellapenna-hhga | INDEL | * | map_l250_m2_e1 | homalt | 97.8355 | 97.4138 | 98.2609 | 95.2243 | 113 | 3 | 113 | 2 | 1 | 50.0000 | |

| ndellapenna-hhga | INDEL | * | segdup | hetalt | 85.5736 | 75.3846 | 98.9474 | 95.6039 | 98 | 32 | 94 | 1 | 1 | 100.0000 | |

| ndellapenna-hhga | INDEL | * | tech_badpromoters | * | 97.3333 | 96.0526 | 98.6486 | 91.8051 | 73 | 3 | 73 | 1 | 1 | 100.0000 | |
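The metric columns in the table can be reproduced from the count columns. The sketch below is an illustration and not the explorer's own code: the function names are hypothetical, and the formulas are inferred from the rows shown here. It assumes Recall = Truth TP / (Truth TP + Truth FN), Precision = Query TP / (Query TP + Query FP), F-score is the harmonic mean of the two, and % FP ma = FP gt / Query FP, all expressed as percentages. Where recall and precision are both zero the F-score is undefined, so the sketch returns `None`.

```python
from typing import Optional


def pct(numerator: int, denominator: int) -> Optional[float]:
    """Return numerator/denominator as a percentage, or None if undefined."""
    if denominator == 0:
        return None
    return 100.0 * numerator / denominator


def recall(truth_tp: int, truth_fn: int) -> Optional[float]:
    # Assumed definition: share of truth variants that were recovered.
    return pct(truth_tp, truth_tp + truth_fn)


def precision(query_tp: int, query_fp: int) -> Optional[float]:
    # Assumed definition: share of assessed query calls that were correct.
    return pct(query_tp, query_tp + query_fp)


def f_score(recall_pct: Optional[float], precision_pct: Optional[float]) -> Optional[float]:
    # Harmonic mean of recall and precision; undefined when either input
    # is undefined or when both are zero.
    if recall_pct is None or precision_pct is None:
        return None
    if recall_pct + precision_pct == 0:
        return None
    return 2.0 * recall_pct * precision_pct / (recall_pct + precision_pct)


def pct_fp_ma(fp_gt: int, query_fp: int) -> Optional[float]:
    # Inferred from the table: "% FP ma" matches FP gt / Query FP in the rows shown.
    return pct(fp_gt, query_fp)


# Counts taken from the first qzeng-custom row (SNP, *, lowcmp_SimpleRepeat_triTR_51to200, *).
r = recall(truth_tp=6, truth_fn=3)
p = precision(query_tp=6, query_fp=2)
print(round(r, 4), round(p, 4), round(f_score(r, p), 4), pct_fp_ma(fp_gt=1, query_fp=2))
```

Run against the first row of the table, this yields the same Recall, Precision, F-score, and % FP ma values the explorer reports for that row, which supports the assumed definitions for this page of results.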