PrecisionFDA

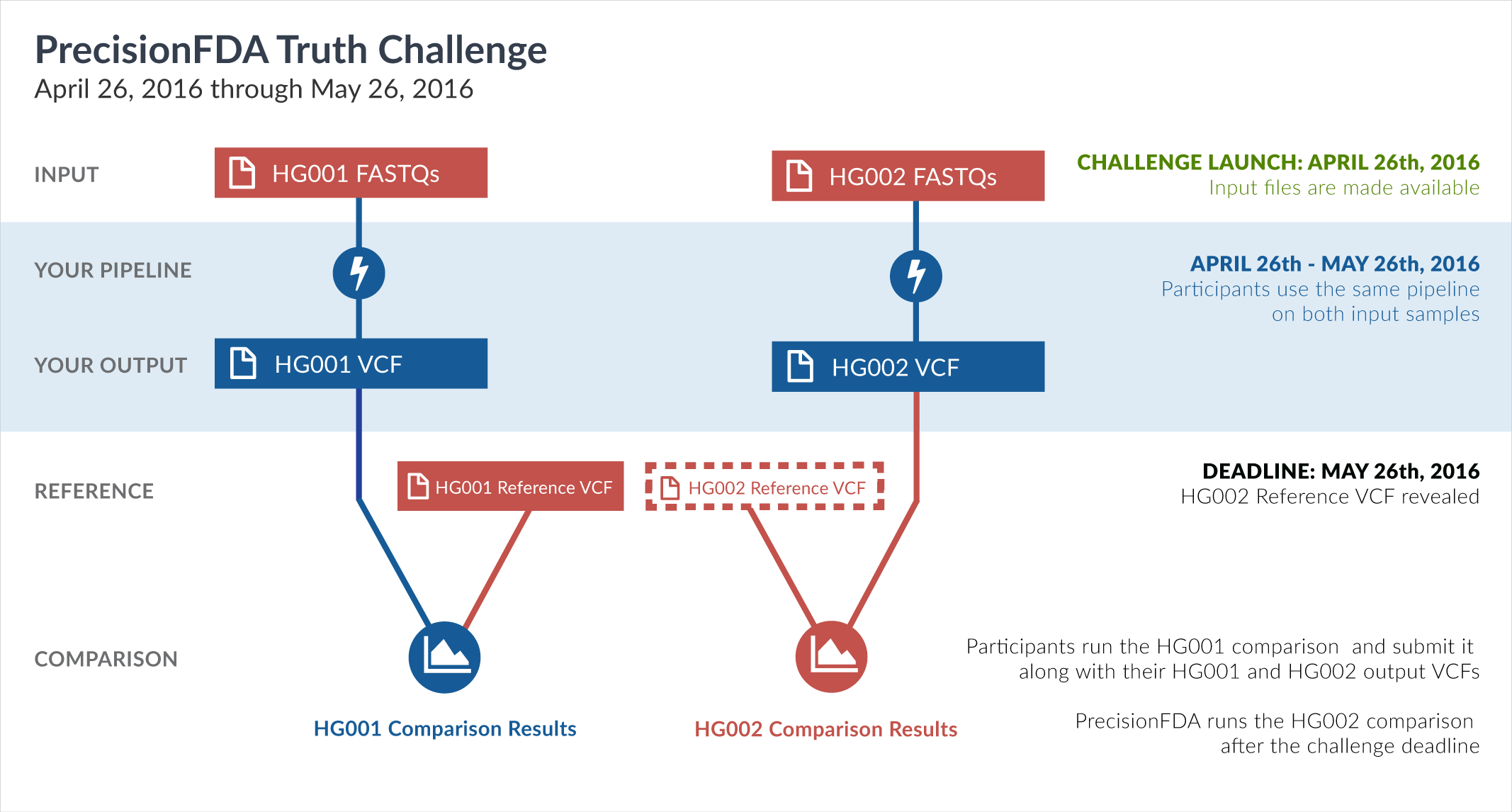

Truth Challenge

Engage and improve DNA test results with our community challenges

Explore HG002 comparison results

Use this interactive explorer to filter all results across submission entries and multiple dimensions.

Showing rows 1201-1250 of 86044.

| Entry | Type | Subtype | Subset | Genotype | F-score | Recall | Precision | Frac_NA | Truth TP | Truth FN | Query TP | Query FP | FP gt | % FP ma | |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| raldana-dualsentieon | INDEL | C16_PLUS | lowcmp_SimpleRepeat_diTR_gt200 | homalt | 0.0000 | 0.0000 | 0.0000 | 0 | 0 | 0 | 0 | 0 | |||
| raldana-dualsentieon | INDEL | C16_PLUS | lowcmp_SimpleRepeat_diTR_51to200 | homalt | 0.0000 | 0.0000 | 0.0000 | 0 | 0 | 0 | 0 | 0 | |||
| raldana-dualsentieon | INDEL | C16_PLUS | lowcmp_SimpleRepeat_diTR_11to50 | homalt | 0.0000 | 0.0000 | 0.0000 | 0 | 0 | 0 | 0 | 0 | |||
| raldana-dualsentieon | INDEL | C16_PLUS | lowcmp_Human_Full_Genome_TRDB_hg19_150331_all_merged | homalt | 0.0000 | 0.0000 | 0.0000 | 0 | 0 | 0 | 0 | 0 | |||
| raldana-dualsentieon | INDEL | C16_PLUS | lowcmp_Human_Full_Genome_TRDB_hg19_150331_all_gt95identity_merged | homalt | 0.0000 | 0.0000 | 0.0000 | 0 | 0 | 0 | 0 | 0 | |||
| raldana-dualsentieon | INDEL | C16_PLUS | lowcmp_Human_Full_Genome_TRDB_hg19_150331_TRlt7_lt51bp_gt95identity_merged | homalt | 0.0000 | 0.0000 | 0.0000 | 0 | 0 | 0 | 0 | 0 | |||
| raldana-dualsentieon | INDEL | C16_PLUS | lowcmp_Human_Full_Genome_TRDB_hg19_150331_TRlt7_lt101bp_gt95identity_merged | homalt | 0.0000 | 0.0000 | 0.0000 | 0 | 0 | 0 | 0 | 0 | |||
| raldana-dualsentieon | INDEL | C16_PLUS | lowcmp_Human_Full_Genome_TRDB_hg19_150331_TRlt7_gt200bp_gt95identity_merged | homalt | 0.0000 | 0.0000 | 0.0000 | 0 | 0 | 0 | 0 | 0 | |||
| raldana-dualsentieon | INDEL | C16_PLUS | lowcmp_Human_Full_Genome_TRDB_hg19_150331_TRlt7_51to200bp_gt95identity_merged | homalt | 0.0000 | 0.0000 | 0.0000 | 0 | 0 | 0 | 0 | 0 | |||
| raldana-dualsentieon | INDEL | C16_PLUS | lowcmp_Human_Full_Genome_TRDB_hg19_150331_TRgt6_lt51bp_gt95identity_merged | homalt | 0.0000 | 0.0000 | 0.0000 | 0 | 0 | 0 | 0 | 0 | |||
| raldana-dualsentieon | INDEL | C16_PLUS | lowcmp_Human_Full_Genome_TRDB_hg19_150331_TRgt6_lt101bp_gt95identity_merged | homalt | 0.0000 | 0.0000 | 0.0000 | 0 | 0 | 0 | 0 | 0 | |||
| raldana-dualsentieon | INDEL | C16_PLUS | lowcmp_Human_Full_Genome_TRDB_hg19_150331_TRgt6_gt200bp_gt95identity_merged | homalt | 0.0000 | 0.0000 | 0.0000 | 0 | 0 | 0 | 0 | 0 | |||
| raldana-dualsentieon | INDEL | C16_PLUS | lowcmp_Human_Full_Genome_TRDB_hg19_150331_TRgt6_51to200bp_gt95identity_merged | homalt | 0.0000 | 0.0000 | 0.0000 | 0 | 0 | 0 | 0 | 0 | |||
| raldana-dualsentieon | INDEL | C16_PLUS | lowcmp_Human_Full_Genome_TRDB_hg19_150331 | homalt | 0.0000 | 0.0000 | 0.0000 | 0 | 0 | 0 | 0 | 0 | |||
| raldana-dualsentieon | INDEL | C16_PLUS | lowcmp_AllRepeats_lt51bp_gt95identity_merged | homalt | 0.0000 | 0.0000 | 0.0000 | 0 | 0 | 0 | 0 | 0 | |||
| raldana-dualsentieon | INDEL | C16_PLUS | lowcmp_AllRepeats_gt200bp_gt95identity_merged | homalt | 0.0000 | 0.0000 | 0.0000 | 0 | 0 | 0 | 0 | 0 | |||
| raldana-dualsentieon | INDEL | C16_PLUS | lowcmp_AllRepeats_51to200bp_gt95identity_merged | homalt | 0.0000 | 0.0000 | 0.0000 | 0 | 0 | 0 | 0 | 0 | |||
| raldana-dualsentieon | INDEL | C16_PLUS | func_cds | homalt | 0.0000 | 0.0000 | 0.0000 | 0 | 0 | 0 | 0 | 0 | |||
| raldana-dualsentieon | INDEL | C16_PLUS | decoy | homalt | 0.0000 | 0.0000 | 0.0000 | 0 | 0 | 0 | 0 | 0 | |||
| raldana-dualsentieon | INDEL | C16_PLUS | HG002compoundhet | homalt | 0.0000 | 0.0000 | 0.0000 | 0 | 0 | 0 | 0 | 0 | |||
| raldana-dualsentieon | INDEL | C16_PLUS | HG002complexvar | homalt | 0.0000 | 0.0000 | 0.0000 | 0 | 0 | 0 | 0 | 0 | |||
| raldana-dualsentieon | INDEL | C16_PLUS | * | homalt | 0.0000 | 0.0000 | 0.0000 | 0 | 0 | 0 | 0 | 0 | |||
| raldana-dualsentieon | INDEL | * | tech_badpromoters | homalt | 100.0000 | 100.0000 | 100.0000 | 57.1429 | 33 | 0 | 33 | 0 | 0 | ||
| raldana-dualsentieon | INDEL | * | segdupwithalt | homalt | 0.0000 | 100.0000 | 0 | 0 | 0 | 0 | 0 | ||||
| raldana-dualsentieon | INDEL | * | segdup | homalt | 99.2731 | 99.5833 | 98.9648 | 93.2239 | 956 | 4 | 956 | 10 | 9 | 90.0000 | |
| raldana-dualsentieon | INDEL | * | map_siren | homalt | 99.3604 | 99.3597 | 99.3611 | 79.7735 | 2638 | 17 | 2644 | 17 | 11 | 64.7059 | |
| raldana-dualsentieon | INDEL | * | map_l250_m2_e1 | homalt | 96.9163 | 94.8276 | 99.0991 | 94.6839 | 110 | 6 | 110 | 1 | 1 | 100.0000 | |
| raldana-dualsentieon | INDEL | * | map_l250_m2_e0 | homalt | 96.8889 | 94.7826 | 99.0909 | 94.5893 | 109 | 6 | 109 | 1 | 1 | 100.0000 | |
| raldana-dualsentieon | INDEL | * | map_l250_m1_e0 | homalt | 96.7136 | 94.4954 | 99.0385 | 93.9850 | 103 | 6 | 103 | 1 | 1 | 100.0000 | |
| raldana-dualsentieon | INDEL | * | map_l250_m0_e0 | homalt | 97.9592 | 96.0000 | 100.0000 | 96.8545 | 24 | 1 | 24 | 0 | 0 | ||
| raldana-dualsentieon | INDEL | * | map_l150_m2_e1 | homalt | 98.1595 | 97.5610 | 98.7654 | 88.0266 | 480 | 12 | 480 | 6 | 3 | 50.0000 | |
| raldana-dualsentieon | INDEL | * | map_l150_m2_e0 | homalt | 98.2199 | 97.5052 | 98.9451 | 88.0424 | 469 | 12 | 469 | 5 | 2 | 40.0000 | |
| raldana-dualsentieon | INDEL | * | map_l150_m1_e0 | homalt | 98.1461 | 97.4026 | 98.9011 | 86.8269 | 450 | 12 | 450 | 5 | 2 | 40.0000 | |
| raldana-dualsentieon | INDEL | * | map_l150_m0_e0 | homalt | 97.8462 | 96.9512 | 98.7578 | 89.5182 | 159 | 5 | 159 | 2 | 2 | 100.0000 | |
| raldana-dualsentieon | INDEL | * | map_l125_m2_e1 | homalt | 98.7047 | 98.4496 | 98.9610 | 85.2744 | 762 | 12 | 762 | 8 | 3 | 37.5000 | |
| raldana-dualsentieon | INDEL | * | map_l125_m2_e0 | homalt | 98.6859 | 98.4273 | 98.9460 | 85.1526 | 751 | 12 | 751 | 8 | 3 | 37.5000 | |
| raldana-dualsentieon | INDEL | * | map_l125_m1_e0 | homalt | 98.6977 | 98.3607 | 99.0371 | 84.0640 | 720 | 12 | 720 | 7 | 3 | 42.8571 | |
| raldana-dualsentieon | INDEL | * | map_l125_m0_e0 | homalt | 98.0600 | 97.8873 | 98.2332 | 86.1002 | 278 | 6 | 278 | 5 | 3 | 60.0000 | |
| raldana-dualsentieon | INDEL | * | map_l100_m2_e1 | homalt | 98.9836 | 98.8290 | 99.1386 | 83.0322 | 1266 | 15 | 1266 | 11 | 5 | 45.4545 | |
| raldana-dualsentieon | INDEL | * | map_l100_m2_e0 | homalt | 98.9674 | 98.8105 | 99.1249 | 82.9559 | 1246 | 15 | 1246 | 11 | 5 | 45.4545 | |
| raldana-dualsentieon | INDEL | * | map_l100_m1_e0 | homalt | 98.9792 | 98.7775 | 99.1817 | 81.8155 | 1212 | 15 | 1212 | 10 | 5 | 50.0000 | |
| raldana-dualsentieon | INDEL | * | map_l100_m0_e0 | homalt | 98.6220 | 98.4283 | 98.8166 | 82.4931 | 501 | 8 | 501 | 6 | 3 | 50.0000 | |
| raldana-dualsentieon | INDEL | * | lowcmp_SimpleRepeat_triTR_51to200 | homalt | 96.9072 | 100.0000 | 94.0000 | 52.3810 | 47 | 0 | 47 | 3 | 3 | 100.0000 | |
| raldana-dualsentieon | INDEL | * | lowcmp_SimpleRepeat_triTR_11to50 | homalt | 99.9303 | 100.0000 | 99.8608 | 45.9493 | 2152 | 0 | 2152 | 3 | 3 | 100.0000 | |
| raldana-dualsentieon | INDEL | * | lowcmp_SimpleRepeat_quadTR_gt200 | homalt | 0.0000 | 100.0000 | 0 | 0 | 0 | 0 | 0 | ||||
| raldana-dualsentieon | INDEL | * | lowcmp_SimpleRepeat_quadTR_51to200 | homalt | 98.1964 | 99.5935 | 96.8379 | 59.5200 | 490 | 2 | 490 | 16 | 15 | 93.7500 | |
| raldana-dualsentieon | INDEL | * | lowcmp_SimpleRepeat_quadTR_11to50 | homalt | 99.7452 | 99.9341 | 99.5570 | 56.8831 | 6068 | 4 | 6068 | 27 | 27 | 100.0000 | |
| raldana-dualsentieon | INDEL | * | lowcmp_SimpleRepeat_homopolymer_gt10 | homalt | 97.6744 | 100.0000 | 95.4545 | 99.9595 | 21 | 0 | 21 | 1 | 0 | 0.0000 | |
| raldana-dualsentieon | INDEL | * | lowcmp_SimpleRepeat_homopolymer_6to10 | homalt | 99.9381 | 99.9734 | 99.9027 | 56.1708 | 11293 | 3 | 11293 | 11 | 11 | 100.0000 | |
| raldana-dualsentieon | INDEL | * | lowcmp_SimpleRepeat_diTR_gt200 | homalt | 0.0000 | 100.0000 | 0 | 0 | 0 | 0 | 0 | ||||
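
The rate columns can be cross-checked against the count columns. The sketch below is not taken from the page; it assumes the usual benchmarking definitions (Recall = Truth TP / (Truth TP + Truth FN), Precision = Query TP / (Query TP + Query FP), F-score = harmonic mean of the two), which do reproduce the `segdup` row above. The function name `rates_from_counts` is hypothetical, and returning `None` for rows with no truth or query variants is a choice made here, not necessarily how the explorer reports them.

```python
def rates_from_counts(truth_tp, truth_fn, query_tp, query_fp):
    """Return (recall, precision, f_score) as percentages.

    A value is None when its denominator is zero (assumed convention).
    """
    truth_total = truth_tp + truth_fn
    query_total = query_tp + query_fp

    recall = 100.0 * truth_tp / truth_total if truth_total else None
    precision = 100.0 * query_tp / query_total if query_total else None

    if recall is None or precision is None or recall + precision == 0:
        f_score = None
    else:
        # Harmonic mean of recall and precision.
        f_score = 2 * recall * precision / (recall + precision)
    return recall, precision, f_score


# Counts from the INDEL / * / segdup / homalt row:
# Truth TP = 956, Truth FN = 4, Query TP = 956, Query FP = 10.
recall, precision, f_score = rates_from_counts(956, 4, 956, 10)
print(f"{f_score:.4f} {recall:.4f} {precision:.4f}")
# prints "99.2731 99.5833 98.9648"
```

Truth TP and Query TP are kept as separate arguments because they can differ in the table, as in the `map_siren` row (2638 vs 2644).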